Research Direction

Designing Embodied Interaction for Accessible and Expressive Systems

I am interested in designing embodied interaction systems that treat the human body not only as an input interface, but also as a medium for shaping perception, experience, and social connection.

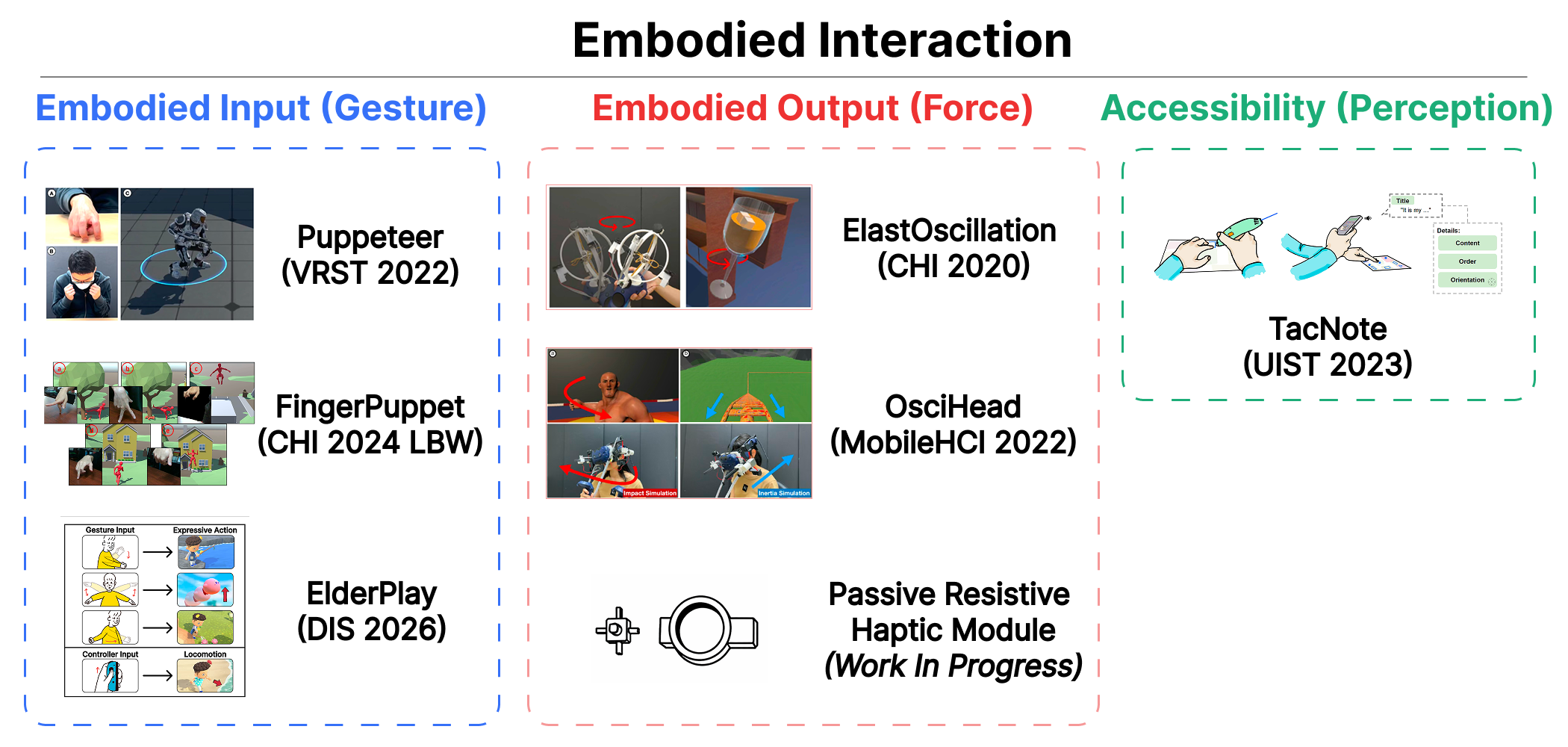

My research focuses on three complementary dimensions: embodied input (gesture), embodied output (force), and accessibility-oriented perception. Through gesture-based interaction, I explore how bodily movement can serve as intuitive and expressive input for virtual and physical systems. Through force and haptic feedback, I investigate how physical sensations shape users’ perception, cognition, and behavior. Beyond interaction efficiency, I am also interested in how embodied systems can support accessibility and inclusive design by leveraging alternative sensory modalities and adapting interaction to users’ diverse abilities.

Across virtual reality, wearable devices, and everyday interactive systems, my work aims to create technologies that foster meaningful engagement, reduce barriers, and support shared experiences among people with different abilities and backgrounds. I believe embodied interaction can move beyond abstract control mechanisms and become a foundation for more inclusive, expressive, and human-centered computing.